
Six months ago, I thought AI characters in performance ads were a useful side experiment. Worth testing. Not worth shipping. The data forced a different position.

Across thirteen client accounts on Meta and TikTok, in A/B tests with equal budgets behind both arms, AI characters matched or beat the UGC (user-generated content) creators we cast, brief and pay on conversion rate in nine accounts out of thirteen. In our top three winners, cost per acquisition (CPA) was between seventeen and twenty-nine percent lower. Ten new ad variants shipped in forty-eight hours, against the two to three weeks it takes to push the same volume through a UGC creator pipeline.

AI characters in performance ads are synthetic on-camera presenters generated by current-generation AI video models, used to do the same job a UGC creator would: deliver the hook, the demo, the testimonial, the call to action. Not the uncanny-valley avatars of 2023. The technology has moved hard, and the conversation about whether they belong in production has moved with it. This post is the readout.

Thirteen accounts. Nine wins. Here is the breakdown.

Last quarter the Admiral Media team ran AI characters head to head against traditional UGC across thirteen client accounts spanning seven verticals. Equal budgets behind both arms. No thumb on the scale. The expected outcome, going in, was that AI characters would hold their own as a fill-in for low-stakes top-of-funnel volume and lose on conversion. That is not what happened.

| Headline metric | AI character vs UGC | What it means in practice |

|---|---|---|

| Accounts where AI matched or beat UGC on conversion rate | 9 of 13 | Majority of categories tested. Not a fluke result. |

| CPA reduction in top three winners | 17% to 29% lower | Material unit economics, not a marginal lift. |

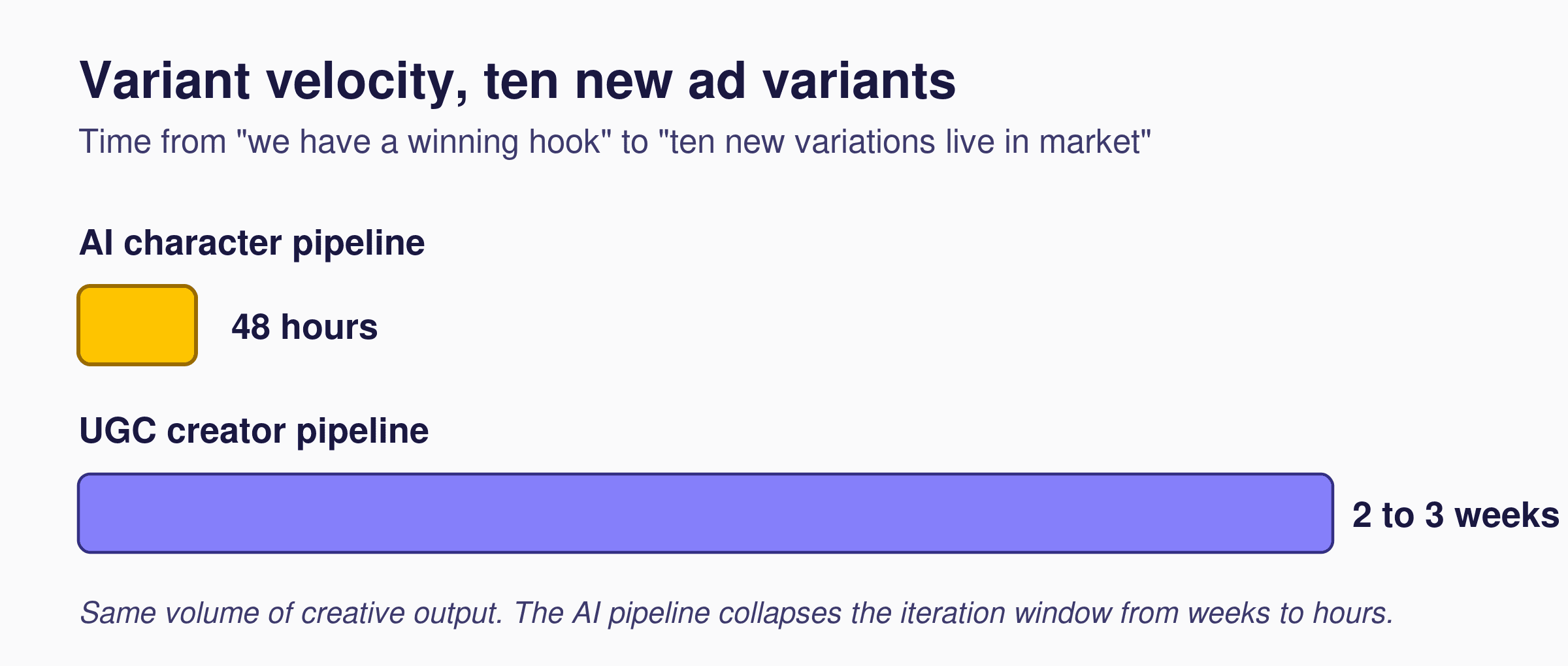

| Variant velocity | 10 in 48 hours | UGC creators typically deliver the same volume in 2 to 3 weeks. |

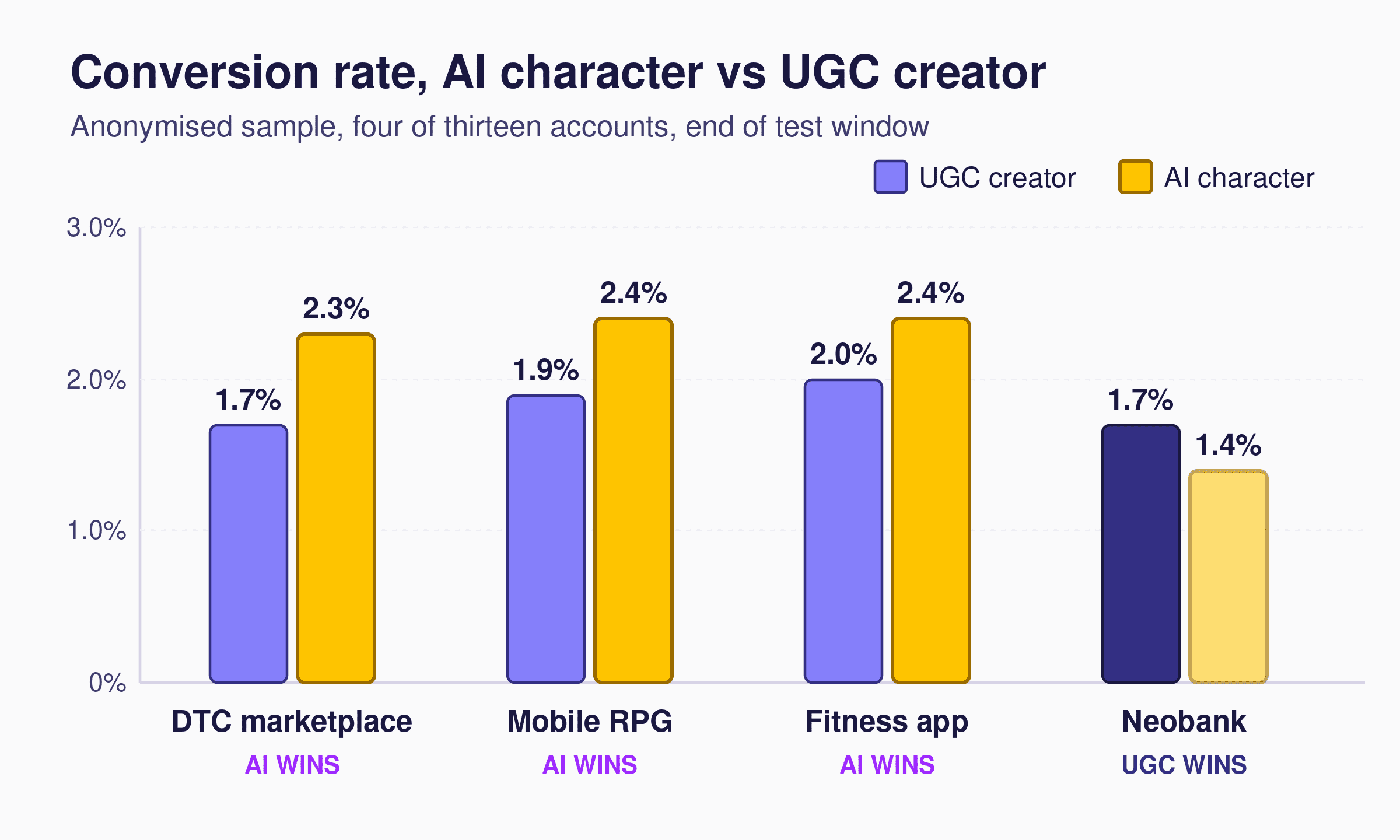
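To put the CPA row in volume terms, here is a minimal arithmetic sketch. It assumes the budget is held fixed and that the lower CPA holds as spend is reallocated, which the tests above do not by themselves guarantee. The function name is illustrative, not part of any tool we use.

```python
def extra_acquisitions_pct(cpa_reduction_pct: float) -> float:
    """Percent more acquisitions at a fixed budget when CPA falls by the given percent.

    Acquisitions = budget / CPA, so a CPA cut of r multiplies volume by 1 / (1 - r).
    """
    remaining_share = 1 - cpa_reduction_pct / 100
    return (1 / remaining_share - 1) * 100


# The two ends of the 17% to 29% range reported in the table.
for reduction in (17, 29):
    gain = extra_acquisitions_pct(reduction)
    print(f"CPA {reduction}% lower -> about {gain:.1f}% more acquisitions")
```

Under those assumptions, the range works out to roughly 20% to 41% more acquisitions for the same spend.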

The per-vertical readout sharpens the picture. AI wins are not evenly distributed. They cluster in categories where the on-camera role is “deliver the hook clearly” rather than “be a person you trust with your money.”

| Vertical | UGC conversion rate | AI conversion rate | Winner |
|---|---|---|---|
| DTC marketplace | 1.7% | 2.3% | AI |
| Mobile RPG | 1.9% | 2.4% | AI |
| Fitness app | 2.0% | 2.4% | AI |
| Neobank | 1.7% | 1.4% | UGC |

Anonymised sample, four of thirteen accounts. Real campaigns, equal budgets, end of test window.
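The gaps in the sample table are easier to compare as relative lift. This is a short sketch using only the four rates shown above. The helper name `relative_lift` is ours for illustration, and the result says nothing about statistical significance.

```python
# Conversion rates in percent, copied from the four-account sample table:
# (UGC rate, AI rate) per vertical.
rates = {
    "DTC marketplace": (1.7, 2.3),
    "Mobile RPG": (1.9, 2.4),
    "Fitness app": (2.0, 2.4),
    "Neobank": (1.7, 1.4),
}


def relative_lift(ugc_rate: float, ai_rate: float) -> float:
    """Relative change of the AI arm versus the UGC arm, in percent."""
    return (ai_rate - ugc_rate) / ugc_rate * 100


lifts = {
    vertical: round(relative_lift(ugc, ai), 1)
    for vertical, (ugc, ai) in rates.items()
}

for vertical, lift in lifts.items():
    print(f"{vertical}: {lift:+.1f}% vs UGC")
```

On these figures the AI arm sits roughly 20% to 35% ahead in the three winning verticals and about 18% behind in the neobank account.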

We could not replicate it with fintech

The one category where AI characters lost every test we ran was high-trust finance: neobanks, lending, investment apps. Human creators stayed ahead, and not by a small margin. The tests were not close.

The reason is structural, not technological. The viewer’s question on a finance ad is “can I trust this person with my money,” and a synthetic on-camera presenter does not yet clear that bar. The missing trust signal does not show up in conversion rate alone. It also shows up downstream, in LTV and complaint rates. We will not run AI characters for finance clients yet. The category boundary matters.

Everywhere else (app installs, e-commerce, mobile gaming, health and fitness, brand DTC), AI characters work. Often better than UGC, occasionally on par. Any team running a synthetic creator strategy should be drawing the fintech line explicitly inside their own brief.

What actually changed in the technology

The thing that flipped this from experiment to production is not one model release. It is the cumulative jump in three places at once: facial expression naturalness, lip-sync accuracy, and persona controllability. Our characters can now play a 25-year-old fitness instructor speaking German with a Berlin accent, and the same person at 40 explaining a SaaS product in English and Spanish. Same script, same energy, different markets. No casting calls. No reshoot days. No usage rights to renegotiate when the campaign extends.

And they are consistent. Fifty-one ad variations of the same person, shipped same day. That kind of repeatability is structurally hard to get from a freelance creator pipeline, where every additional variant means another booking, another brief, another schedule.

How we make them feel real

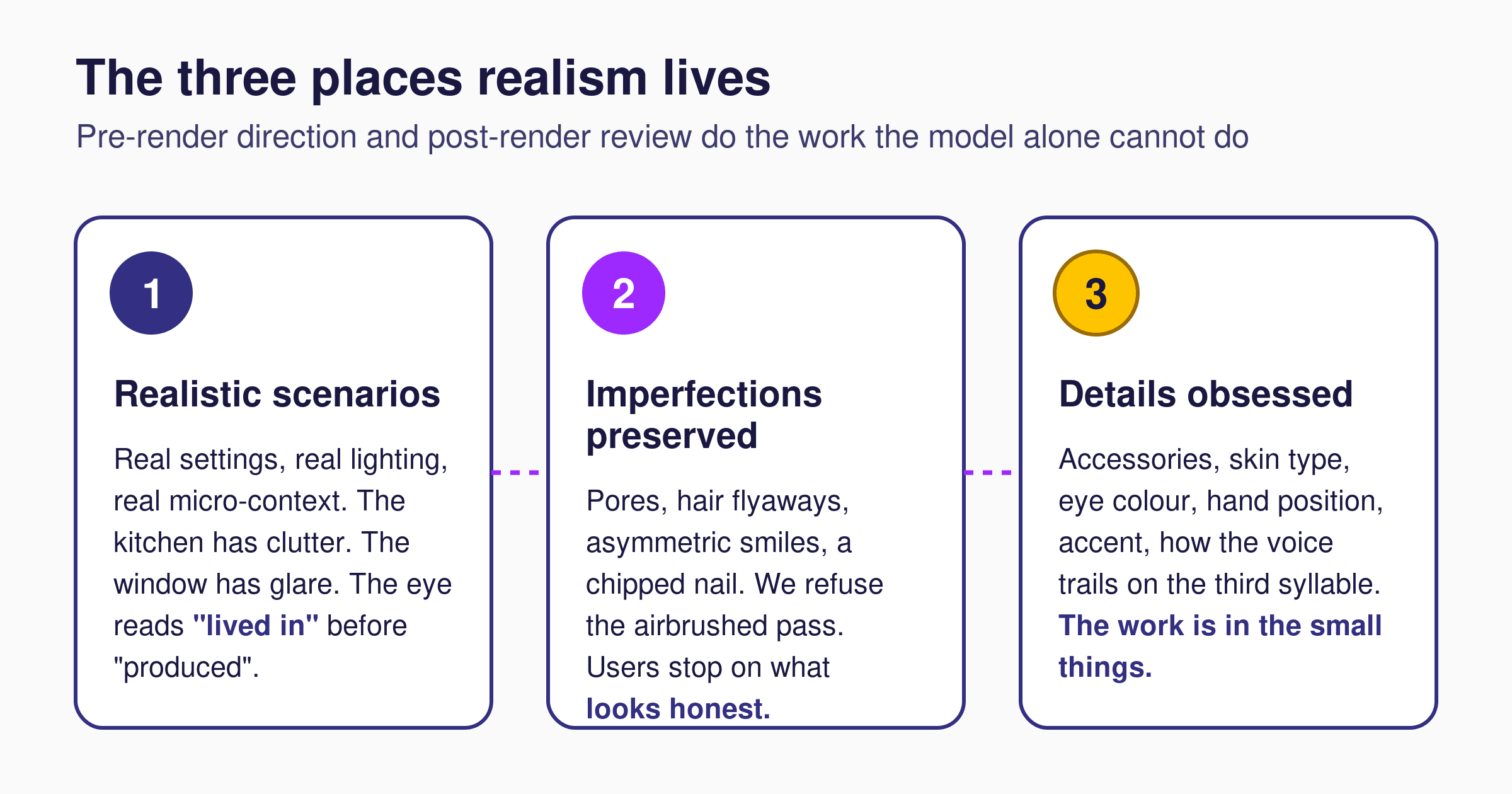

The trick is not the model. It is what we do before and after the model runs. Realism lives in three places, and missing any one of them is what makes AI creative read as fake.

- Realistic scenarios. Real settings, real lighting, real micro-context. The kitchen actually has clutter. The window actually has glare. The desk has the wrong cable in the frame. The eye reads “lived in” before it reads “produced.”

- Imperfections preserved. Pores, hair flyaways, asymmetric smiles, a chipped nail. We refuse the airbrushed pass. The platform algorithms reward what users stop on, and users stop on what looks honest.

- Details obsessed. Accessories, skin type, eye colour, hand position, accent, even how the voice trails on the third syllable. The work is in the small things. A character that is “almost right” reads worse than no character at all.

This is the operating model behind the Admiral Media AI Creative Factory: a build pipeline where character setup, scenario direction, and post-render review are treated as separate craft steps, not a single prompt.

The real story is speed

The lift on conversion rate is the headline. The story underneath the headline is variant velocity. When a hook is winning on Meta or TikTok, the right move is to ship ten variations of it within forty-eight hours, so the algorithm has fresh signal before fatigue sets in.

With human creators, that same iteration cycle takes two to three weeks. In performance marketing, two weeks is a lifetime. By the time the variants land, the original hook has decayed and the auction has moved on.

The AI character pipeline collapses that iteration window from weeks to hours. Winning hooks get exploited at the cadence the algorithms actually reward. That, more than any single conversion-rate point, is the structural advantage. It is also the reason the conversion-rate lead grew rather than flattened across our multi-week tests: faster iteration kept the AI arm closer to the front of the auction every week the test ran.

The Admiral Media AI Character Production Framework

The Admiral Media team has formalised the operating model behind the AI Creative Factory into a named framework, used on every account that runs synthetic creators. It is the sequence we wish we had been given six months ago.

- Vertical fit decision. Decide whether AI characters belong on this account at all. High-trust finance and regulated medical: no, not yet. App installs, e-commerce, gaming, health and fitness, brand DTC: yes, run the test.

- Persona library. Build a controlled library of characters tuned to the brand’s audience segments by age, language, accent, and context, before any creative is shipped. The persona is the asset, not the individual ad.

- Scenario direction. Direct the scene the same way a UGC director would, with a real setting brief, a real prop list, and a real micro-context. AI gives you the talent, not the direction. The direction still has to be made.

- Imperfection pass. Refuse the airbrushed render. Tune skin texture, eye asymmetry, hair flyaways, and lighting irregularity until the eye reads honesty. The platform algorithms reward what users stop on.

- Variant production. Once the character and scenario hold, ship ten or more variants of the winning hook in under forty-eight hours. Variant velocity is the actual moat, not the rendering quality.

- Human review gate. Every shipped asset clears a human reviewer before it touches a live campaign. The reviewer checks brand consistency, scene plausibility, and category fit. This is the step that keeps the Factory from shipping work that looks artificial.

What this means for you

Two simple statements, no hedging.

One. If you ship volume creative on Meta or TikTok and you are not testing AI characters yet, you are leaving conversion lift and CPA savings on the table on roughly seven out of ten accounts. The cost of finding out is one quarter of split-test budget. The cost of not finding out is paying a UGC tax on every campaign while a category of synthetic creative quietly outperforms it on the same auction.

Two. If you are testing them, the next move is to run them in the right verticals: app installs, e-commerce, mobile gaming, health and fitness, brand DTC. Save fintech and high-trust categories for human creators. Keep the lines clean.

How to start the test this quarter

The shortest path to a real answer is a simple one. Pick one of your top three campaigns. Hold the audience, the bidding, the budget, and the hook structure constant. Replace the on-camera talent in one ad set with an AI character that matches the same brief. Run for two weeks. Read the conversion rate, the CPA, and the comment-section sentiment side by side.

That is the entire test. No new measurement stack. No new agency relationship. No quarterly transformation programme. The point of the framework above is to compress that test cycle from a project into a sprint, and to make sure the result is clean enough to act on the next time the planning meeting opens.

If the AI arm wins, you have a permanent variant velocity advantage on that campaign. If UGC wins, you have evidence to hand to the team and a reason to keep the human creator pipeline funded. Either result is useful. The only outcome that is not useful is not running the test.
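Before calling a winner, it helps to check that the conversion-rate gap is larger than noise. One common way to do that is a two-proportion z-test, sketched below with the standard library only. This is an illustration, not a description of how the tests in this post were read. The function name and the conversion and visitor counts are hypothetical, and "visitors" stands in for whatever denominator your account uses for conversion rate.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Two-sided z-test for the difference between two conversion rates.

    Returns (z, p_value). A positive z means arm B converts better than arm A.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both arms convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts for illustration only: UGC arm (A) versus AI arm (B).
z, p_value = two_proportion_z_test(conv_a=170, n_a=10_000, conv_b=230, n_b=10_000)
print(f"z = {z:.2f}, p = {p_value:.4f}")
```

A small p-value (0.05 is the conventional threshold) suggests the gap is unlikely to be chance. With low conversion counts, let the test run longer before acting on it.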

Want the cuts?

If you want to see what the work actually looks like, email andre@admiral.media with the subject line “reel” and the Admiral Media team will send you the AI character cuts shipped this quarter across gaming, e-commerce and health. Real campaigns. Real results. No demo call required.

For deeper context on the work, see the Fastic case study, the NeuroNation case study, and the NeuroNation creative framework. For channel context behind the variant-velocity argument, Meta’s creative best practices documentation and TikTok’s creative best practices guide remain the canonical references on creative diversity at scale. Channel-level deep dives are available on Facebook Ads for mobile apps, TikTok Ads for mobile apps, and the Admiral Media performance marketing overview.

Frequently Asked Questions

Do AI characters actually outperform UGC creators?

Across thirteen Admiral Media client accounts run as paired A/B tests on Meta and TikTok last quarter, AI characters matched or beat UGC creators on conversion rate in nine accounts. Cost per acquisition was 17 to 29 percent lower in the top three winners. The lift was concentrated in app installs, e-commerce, gaming, and health and fitness. The exception is high-trust finance, where human creators remained ahead in every test we ran.

Why did AI characters lose in fintech?

The reason is structural, not technological. On a finance ad, the viewer’s underlying question is “can I trust this person with my money?” A synthetic on-camera presenter does not yet clear that trust bar, and the gap shows up downstream in LTV and complaint rates, not just in conversion rate. Admiral Media will not run AI characters for finance or other high-trust regulated categories yet. Human creators remain the right call there.

How does Admiral Media build AI characters that do not look fake?

Realism lives in three places: realistic scenarios with real lighting and micro-context, preserved imperfections such as pores and hair flyaways, and obsessive detail tuning across accessories, skin, eye colour, hand position, and accent. A human reviewer signs off every asset before it ships. The model is the smallest part of the work. Pre-render direction and post-render review are where the realism is actually built.

How fast can Admiral Media produce AI character variants?

Ten new variants of a winning hook in under 48 hours. The same volume produced through a UGC creator pipeline typically takes two to three weeks. Variant velocity, not rendering quality, is the actual structural advantage of AI character production. It is the reason the conversion-rate lead compounded across multi-week tests rather than flattening.

Should every brand switch from UGC to AI characters?

No. The category fit decision is the first step in the framework. AI characters work in app installs, e-commerce, mobile gaming, health and fitness, and brand DTC, where the on-camera role is to deliver the hook clearly. They do not work yet in high-trust finance, where the viewer is asking whether to hand over money and the trust signal of a real person is structurally important. The right approach is to run AI as a paired test arm against UGC and let the data decide, vertical by vertical.

Are AI characters allowed in performance ads on Meta and TikTok?

Yes. Both Meta and TikTok permit AI-generated creative in advertising, with platform-specific guidance on disclosure and content authenticity. Most regions also have evolving regulatory positions on AI-generated content in ads, including transparency obligations under the EU AI Act. Admiral Media will publish a separate, dedicated post on what those rules mean for performance teams and how the team is testing them in production.